
11/30/2023 Qemu img

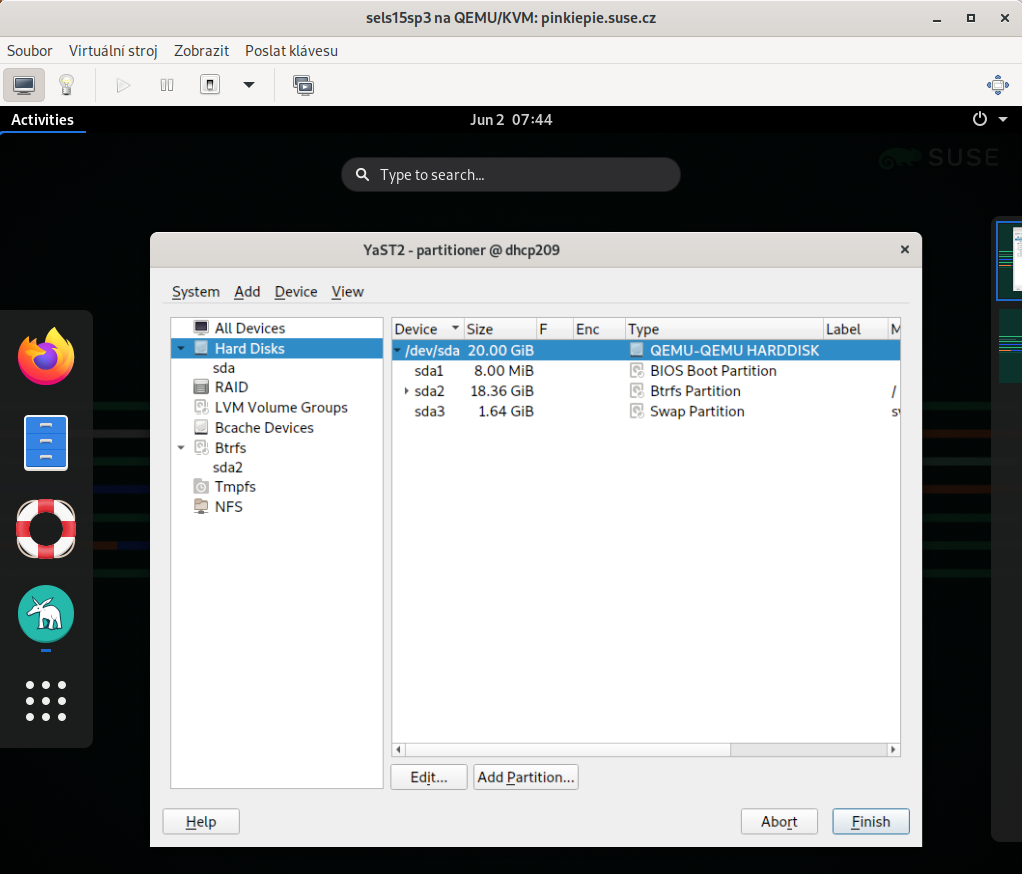

The Ceph team was recently asked what the highest performing QEMU/KVM setup ever tested with librbd is. While VM benchmarks have been collected in the past, there were no recent numbers from a high performing setup. Typically, Ceph engineers try to isolate the performance of specific components by removing bottlenecks at higher levels in the stack. That might mean testing the latency of a single OSD in isolation with synchronous IO via librbd, or using lots of clients with high IO depth to throw a huge amount of IO at a cluster of OSDs on bare metal. In this case, the request was to drive a single QEMU/KVM VM backed by librbd with a high amount of concurrent IO and see how fast it can go. Read on to find out just how fast QEMU/KVM was able to perform when utilizing Ceph's librbd driver.

Thank you to Red Hat and Samsung for providing the Ceph community with the hardware used for this testing. Thank you to Adam Emerson and everyone else on the Ceph team who works on the client-side performance improvements that made these results possible. Finally, thank you to QEMU maintainer Stefan Hajnoczi for providing expertise regarding QEMU/KVM and reviewing a draft copy of this post.

QEMU/KVM version: qemu-kvm-6.2.0-20.module_el8.7.0+1218+f626c2ff.1

All nodes are located on the same Juniper QFX5200 switch and connected with a single 100GbE QSFP28 link. While the cluster has 10 nodes, various configurations were evaluated before deciding on a final setup. Ultimately, 5 nodes were used to serve as OSD hosts with 30 NVMe-backed OSDs in aggregate. The expected aggregate performance of this setup is around 1M random read IOPS and at least 250K random write IOPS (after 3x replication), which should be enough to test the QEMU/KVM performance of a single VM. One of the remaining nodes in the cluster was used to serve as the VM host. Before configuring the VM, though, several test clusters were built with CBT and a test workload was run using fio's librbd engine to get a baseline result.

Baseline Testing

CBT was configured to deploy Ceph with a couple of modified settings vs stock. Primarily, rbd cache was disabled (1), each OSD was given an 8GB OSD memory target, and msgr V1 was used with cephx disabled for the initial testing (but cephx was enabled using msgr V2 in secure mode for later tests). After cluster creation, CBT was configured to create a 6TB RBD volume using fio with the librbd engine, then perform 16KB random reads via fio with iodepth=128 for 5 minutes. As it's very easy to recreate clusters and run multiple baseline tests with CBT, several different cluster sizes were tested to get baseline results for both the librbd engine and the libaio engine on top of kernel-rbd.

(1) Disabling RBD cache at the cluster level will be respected by fio using the librbd engine, but will not be respected by QEMU/KVM's librbd driver. Instead, cache=none must be passed explicitly via qemu-kvm's drive section.

Kernel-RBD performed very well when reading from a single OSD, but librbd in the full 30 OSD configuration achieved the highest performance at just over 122K IOPS. Both librbd and kernel-rbd performed nearly as well on the 5 OSD setup. Still, further testing was performed on the 5 node, 30 OSD configuration. This setup better mimics what users might see on a small but realistically provisioned NVMe-backed Ceph cluster.

VM Deployment

Deploying and booting a QEMU image with RBD is fairly straightforward once you know what to do. A CentOS8 Stream qcow2 image was used, with a root password and public key injected for easy access: wget
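As a rough illustration of the two configuration points this post mentions (the 16KB random read baseline through fio's librbd engine, and passing cache=none explicitly in the QEMU drive section), here is a minimal Python sketch that renders a fio job file and a QEMU `-drive` argument. It is not the CBT configuration or the exact command line used for these tests. The pool name, image names, client name, job name, and `format=raw` are assumptions made for the example, and the sketch only covers the read phase, so the RBD image is assumed to already exist and be populated.

```python
def fio_randread_job(pool: str, image: str, client: str = "admin") -> str:
    """Render a fio job file for 16KB random reads via the librbd engine.

    Mirrors the baseline described in the post: bs=16k, iodepth=128,
    5 minutes of runtime. Pool, image, and client names are hypothetical.
    """
    options = {
        "ioengine": "rbd",       # fio's librbd engine
        "clientname": client,    # cephx client name, without the "client." prefix
        "pool": pool,
        "rbdname": image,
        "rw": "randread",
        "bs": "16k",
        "iodepth": "128",
        "time_based": "1",
        "runtime": "300",        # seconds, i.e. 5 minutes
    }
    lines = ["[rbd-randread]"]   # hypothetical job name
    lines += [f"{key}={value}" for key, value in options.items()]
    return "\n".join(lines) + "\n"


def qemu_rbd_drive(pool: str, image: str, client: str = "admin") -> str:
    """Build a value for QEMU's -drive option for an RBD-backed disk.

    cache=none is set explicitly because, per the post, disabling RBD cache
    at the cluster level is not respected by QEMU/KVM's librbd driver.
    format=raw is an assumption about how the image is stored in RBD.
    """
    return f"format=raw,cache=none,file=rbd:{pool}/{image}:id={client}"


if __name__ == "__main__":
    print(fio_randread_job("rbd", "fio-test"))
    print("-drive", qemu_rbd_drive("rbd", "vm-disk"))
```

The job text can be saved to a file and passed to `fio`, and the drive string can be appended to a `qemu-kvm` command line after `-drive`. Generating both from one place makes it easier to keep the baseline and the VM test pointed at the same pool and client.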
